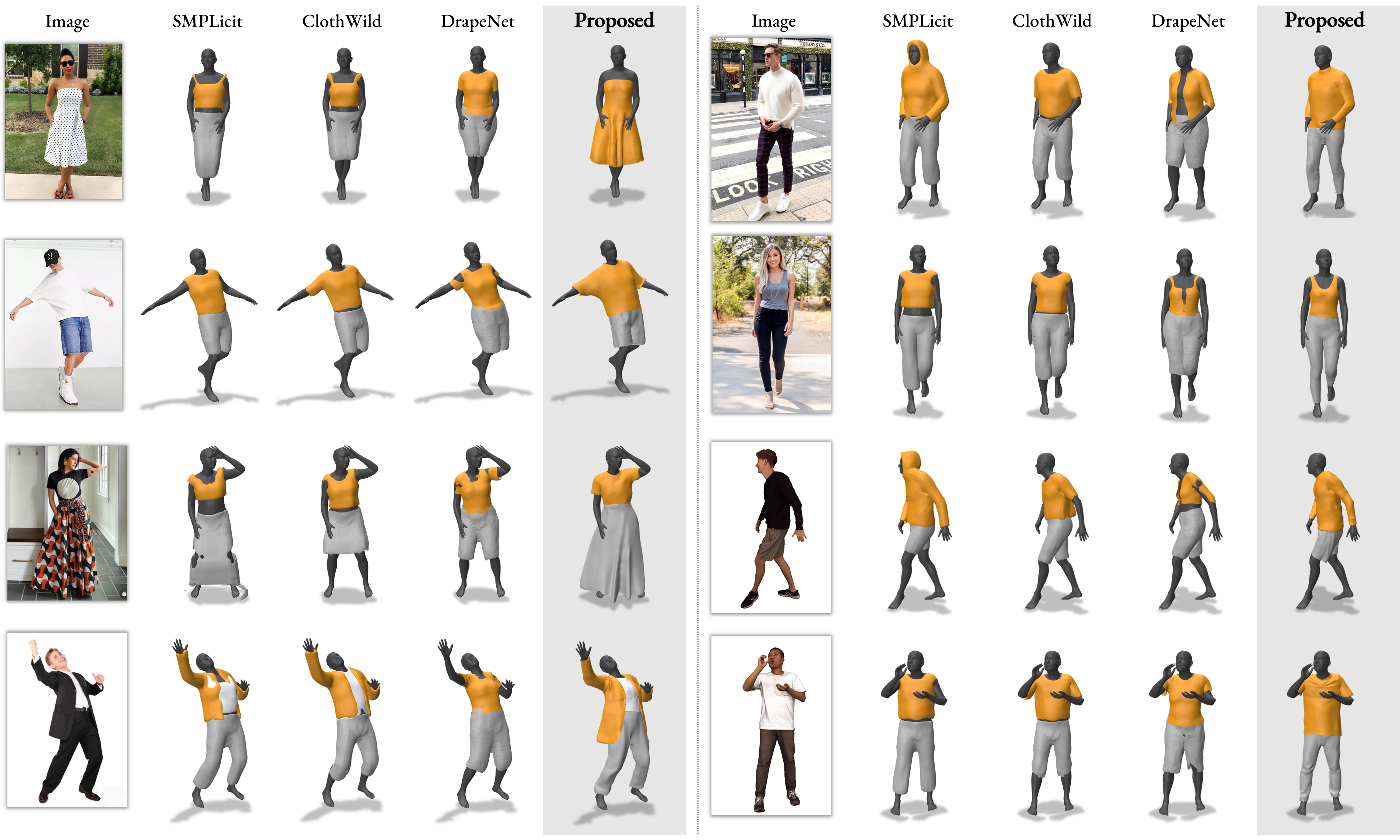

In recent years, digital avatar research has shifted significantly towards modeling, animating and reconstructing clothed human representations, a key step towards creating realistic avatars. However, current 3D cloth generative models are either garment-specific or trained entirely on synthetic data, and therefore lack fine details and realism. In this work, we take a step towards automatic realistic garment design and propose Design2Cloth, a high-fidelity 3D generative model trained on a real-world dataset of more than 2000 subject scans. As a contribution to the fashion industry, we developed a user-friendly adversarial model capable of generating diverse and detailed clothes simply by drawing a 2D cloth mask. Through a series of qualitative and quantitative experiments, we show that Design2Cloth outperforms current state-of-the-art cloth generative models by a large margin. Beyond the generative properties of our network, we show that the proposed method can achieve high-quality reconstructions from single in-the-wild images and 3D scans.

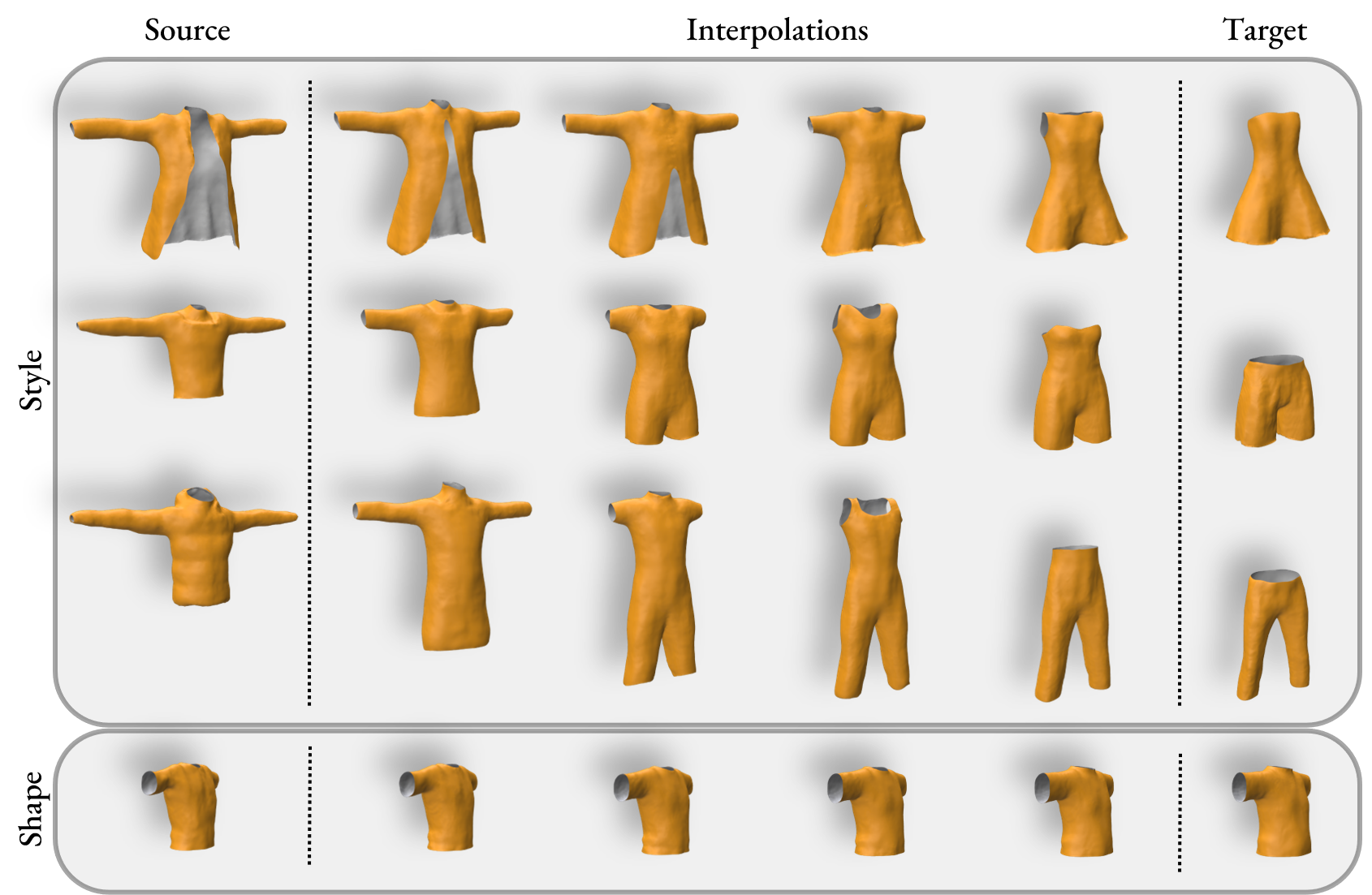

Design2Cloth is a large-scale style and shape model of clothes. It is built from more than 2000 unique garments worn by 2010 distinct identities of various genders, ages, heights and weights. The model enables the modeling and generation of diverse and highly detailed clothes, addressing the inability of previous models to accurately capture diverse garments that follow the real-world distribution.

To create Design2Cloth, we built an automated pipeline to extract cloth meshes from the collected subject scans. We use a triplane representation and a dual-resolution discriminator to model various styles and enforce wrinkle details in the generated clothes. The proposed model reconstructs garments with realistic creases.
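To make the triplane idea concrete, the following is a minimal NumPy sketch of how a generic triplane is usually queried: a 3D point is projected onto three axis-aligned feature planes, each plane is sampled bilinearly, and the three feature vectors are aggregated. This is an illustration only and not the Design2Cloth implementation; the function names, the plane ordering (XY, XZ, YZ), the summation step and the `[-1, 1]` coordinate range are all assumptions made for the example.

```python
import numpy as np


def bilinear_sample(plane, uv):
    """Bilinearly sample a (C, R, R) feature plane at N points.

    uv holds (N, 2) coordinates in [-1, 1]; the first column indexes the
    plane's last axis and the second column indexes its middle axis.
    Returns an (N, C) array. Requires R >= 2.
    """
    _, res, _ = plane.shape
    # Map [-1, 1] to continuous pixel coordinates in [0, res - 1].
    xy = (np.clip(uv, -1.0, 1.0) + 1.0) * 0.5 * (res - 1)
    x, y = xy[:, 0], xy[:, 1]
    # Lower corner of the enclosing cell, clamped so the upper corner exists.
    x0 = np.minimum(np.floor(x).astype(int), res - 2)
    y0 = np.minimum(np.floor(y).astype(int), res - 2)
    x1, y1 = x0 + 1, y0 + 1
    wx, wy = x - x0, y - y0
    # Each lookup has shape (C, N); the weights broadcast over channels.
    out = (plane[:, y0, x0] * (1 - wx) * (1 - wy)
           + plane[:, y0, x1] * wx * (1 - wy)
           + plane[:, y1, x0] * (1 - wx) * wy
           + plane[:, y1, x1] * wx * wy)
    return out.T


def sample_triplane(plane_xy, plane_xz, plane_yz, points):
    """Aggregate triplane features for (N, 3) points in [-1, 1]^3 by summation."""
    f_xy = bilinear_sample(plane_xy, points[:, [0, 1]])
    f_xz = bilinear_sample(plane_xz, points[:, [0, 2]])
    f_yz = bilinear_sample(plane_yz, points[:, [1, 2]])
    return f_xy + f_xz + f_yz


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    channels, res = 8, 32  # hypothetical sizes chosen for the demo
    planes = [rng.standard_normal((channels, res, res)) for _ in range(3)]
    query = rng.uniform(-1.0, 1.0, size=(4, 3))
    print(sample_triplane(*planes, query).shape)
```

In triplane-based generators, the aggregated feature is commonly passed to a small decoder network that predicts a geometric quantity such as a distance or occupancy value at the query point; that decoder is omitted here.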

Models and data, along with their corresponding derivatives, are to be used for non-commercial research and education purposes only. You agree not to copy, sell, trade, or exploit the model for any commercial purposes. In any published research using the models or data, you must cite the following paper:

Design2Cloth: 3D Cloth Generation from 2D Masks, J. Zheng, R. A. Potamias and S. Zafeiriou, Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2024

@InProceedings{Zheng_2024_CVPR,
  author    = {Zheng, Jiali and Potamias, Rolandos Alexandros and Zafeiriou, Stefanos},
  title     = {Design2Cloth: 3D Cloth Generation from 2D Masks},
  booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  month     = {June},
  year      = {2024},
  pages     = {1748-1758}
}